Notes on Rowan Engineering; Or How to Vibe-Refactor a Codebase

stuck in Rowan's dependency slough of despond; fleeing the complexity of microservices & partial refactors; multiplying packages to reduce complexity; using agents to vibe-refactor our whole codebase

Eagle-eyed users of Rowan may have noticed some changes in our logfiles, and Substack readers may have noted a decrease in the number of new features that we’ve shipped recently. We have been in the process of switching our workflow-running backend from a single monolithic package to an amalgamation of library- and workflow-specific packages. We recently shipped updated versions of over half of our workflows, with the rest soon to follow.

This post explains why we’re doing this: the technical problems we’ve encountered in scaling Rowan, the various solutions we considered, and the approach we’ve ultimately settled on. This is a longer and more engineering-focused post than most of our writing, but we hope it will be useful to anyone who is interested in building complicated systems, whether virtual or physical.

The problem

When we started building Rowan’s workflow backend, things were simple. We only had a few packages and we shared functionality between workflows to reduce the size of our codebase, ease maintainability, and increase developer velocity. We used modern development tools such as Ruff for formatting and linting, mypy for type checking, and Pixi for package management, ensuring that we had fast and reproducible builds. Any time we needed a new package we would add it, and over the past two years our codebase has grown significantly.

This growth came at a cost. Computational chemistry is a varied field requiring niche chemistry, physics, and math expertise to develop highly performant code. This code is often written by grad students with little software development expertise and no funding to perform continued maintenance. This has led to an incredibly fragmented ecosystem with many orphaned packages being pinned to outdated dependencies, promiscuously importing numerous packages for minor tasks, or not fixing major bugs that have been open for many years.

As we added packages (currently 151 direct dependencies and innumerable indirect dependencies), solving for an acceptable combination of packages and their dependencies became incredibly difficult. Conflicting PyTorch and CUDA versions in packages are a nightmare to deal with; some packages only support up to Python 3.12 (despite the end of full support for Python 3.12 happening over a year ago) and some packages are arbitrarily hard-pinned to specific versions of packages. Updating or adding new dependencies can upset the careful balance, causing certain packages to accidentally regress, or preventing a solution entirely. This led us to purposefully avoid adding certain features (e.g. certain NNPs, periodic DFT, transition state search packages, etc.) due to the complexity that they introduced.

The monolithic architecture of our codebase also came at a cost, as it encouraged a tangled web of imports between parts of the code that:

slowed startup times, as the entire codebase is traversed following imports (even the anticipated explicit lazy imports in Python 3.15 are not a complete solution),

made it difficult for mypy to type check everything due to the codebase’s size and complexity (taking a minute on a fresh clone of our repo), and

complicated refactors, as developers (or their agents) had to be careful to follow every import to ensure that changes didn’t break things.

So far, we have been able to manage some of this package complexity by outsourcing GPU-related complexity to images on Modal. This avoided developers needing to have CUDA-capable laptops, and allowed different GPU-related dependencies for each Modal image that was deployed. However, it was difficult to maintain consistent images, as the snarl of imports from our monolithic codebase ended up importing everything into our Modal images. Additionally, we no longer had fully atomic deployments and couldn’t use uv.lock files to ensure the Modal images didn’t drift, preventing rollback if something went wrong. Relying on Modal to contain our mess also made it difficult to deploy our platform to our customers’ private clouds.

Potential solutions

All of this was slowing down our development velocity. There were many potential solutions: a partial refactor, excising of problematic dependencies, or leaning more heavily into microservices for new features. However, none of these options provided the necessary base for future growth, particularly if we want to be able to:

Ship fast

Have atomic deployments

Support a wide range of features

Not rewrite everything ourselves

We have performed many partial refactors throughout the growth of our codebase (developers should always be reforming), but partial rewrites cannot deal with the fundamental problem of a monolithic codebase nor the fact that we have to deal with fundamentally conflicting packages. We’ve also often excised dependencies (occasionally rewriting them internally) to simplify our dependency graph, but attempting to rewrite all packages that cause us problems is a great way to introduce bugs and never get anything done.

Many companies solve this problem via microservices where each fundamental operation becomes its own server (typically through containerization) and a single end-to-end workflow calls many of these microservices in an organized manner. This fundamentally solves the dependency issue, since each microservice just has to expose a REST API and can use whatever dependencies it wants under the hood. There are a variety of computational chemistry projects that use this as a solution, including FireWorks, atomate2, and AiiDA.

We disliked this for several reasons, however. Many Rowan workflows combine a cascade of scientific functionality: protein–ligand co-folding can utilize conformer search, geometry optimization, co-folding model inference, pose deduplication, PoseBusters scoring, and strain calculations all run in sequence. Splitting this entire workflow across network boundaries dramatically increases the complexity and fragility of the workflow by introducing a bunch of new potential failure modes.

Basically, we wanted to be able to isolate dependencies by workflows without having to completely fragment our runtime deployment.

Our approach

We decided to perform a complete rewrite of our scientific backend. This is not something we took lightly; rebuilding takes significant amounts of time and can often fall afoul of the second system effect. However, when done correctly it can accelerate future developer velocity and cut many of the Gordian knots that existed in the prior system that were intractable via traditional refactors.

We architected our new package (called Condor) into multiple distinct layers: core, libraries, and workflows, all in the same monorepo with their own lockfiles. In both the libraries and workflows there are numerous distinct packages, each focused on performing a singular task. Imports between libraries are discouraged to prevent complicated webs from forming. Judicious application of factory-based lazy loading of modules with local imports further helps to segregate modules and provide a consistent interface while limiting the number of packages loaded in each workflow.
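A minimal sketch of factory-based lazy loading with local imports is shown here. It is an illustration rather than Condor's actual code: the names `get_backend` and `_BACKENDS` are hypothetical, and the standard-library `fractions` and `decimal` modules stand in for heavy scientific dependencies.

```python
from collections.abc import Callable
from typing import Any


def _load_fraction_backend() -> Any:
    # Local import: the module is only imported when this loader is called.
    from fractions import Fraction

    return Fraction


def _load_decimal_backend() -> Any:
    # A second stand-in dependency, also imported only on demand.
    from decimal import Decimal

    return Decimal


# Hypothetical registry mapping a backend name to the loader that imports it.
_BACKENDS: dict[str, Callable[[], Any]] = {
    "fraction": _load_fraction_backend,
    "decimal": _load_decimal_backend,
}


def get_backend(name: str) -> Any:
    """Return the requested backend, importing its dependency on first use."""
    try:
        loader = _BACKENDS[name]
    except KeyError:
        known = ", ".join(sorted(_BACKENDS))
        raise ValueError(f"unknown backend {name!r}; expected one of: {known}") from None
    return loader()


if __name__ == "__main__":
    Fraction = get_backend("fraction")
    print(Fraction("1/2") + Fraction("1/3"))
```

Because each import lives inside its loader, a workflow that only asks for one backend never pays the import cost (or needs the installation) of the others.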

Core contains our fundamental datatypes and algorithms. It provides numerous abstract base classes (e.g. for quantum-chemistry engines) that get concrete implementations in other packages (e.g. the psi4_engine library). It is available to all libraries and workflows, and as such contains a minimal number of dependencies (e.g. numpy, polars, more-itertools, stjames).
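The split between abstract base classes in core and concrete implementations elsewhere might look roughly like the sketch below. All names here (`Molecule`, `Engine`, `ToyPairEngine`, `single_point`) are hypothetical, and the toy pairwise energy is a placeholder for a real quantum-chemistry call.

```python
import math
from abc import ABC, abstractmethod
from dataclasses import dataclass


@dataclass(frozen=True)
class Molecule:
    """Hypothetical minimal datatype that would live in the core layer."""

    symbols: tuple[str, ...]
    coordinates: tuple[tuple[float, float, float], ...]


class Engine(ABC):
    """Hypothetical abstract interface that every engine library implements."""

    @abstractmethod
    def energy(self, molecule: Molecule) -> float:
        """Return the energy of the molecule in arbitrary units."""


class ToyPairEngine(Engine):
    """Stand-in for a concrete engine package; sums 1/r over all atom pairs."""

    def energy(self, molecule: Molecule) -> float:
        coords = molecule.coordinates
        total = 0.0
        for i in range(len(coords)):
            for j in range(i + 1, len(coords)):
                total += 1.0 / math.dist(coords[i], coords[j])
        return total


def single_point(engine: Engine, molecule: Molecule) -> float:
    # Calling code depends only on the abstract interface, not on any engine package.
    return engine.energy(molecule)


if __name__ == "__main__":
    h2 = Molecule(("H", "H"), ((0.0, 0.0, 0.0), (0.0, 0.0, 2.0)))
    print(single_point(ToyPairEngine(), h2))
```

Keeping the interface in core means a workflow can swap engines without importing any engine-specific dependencies itself.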

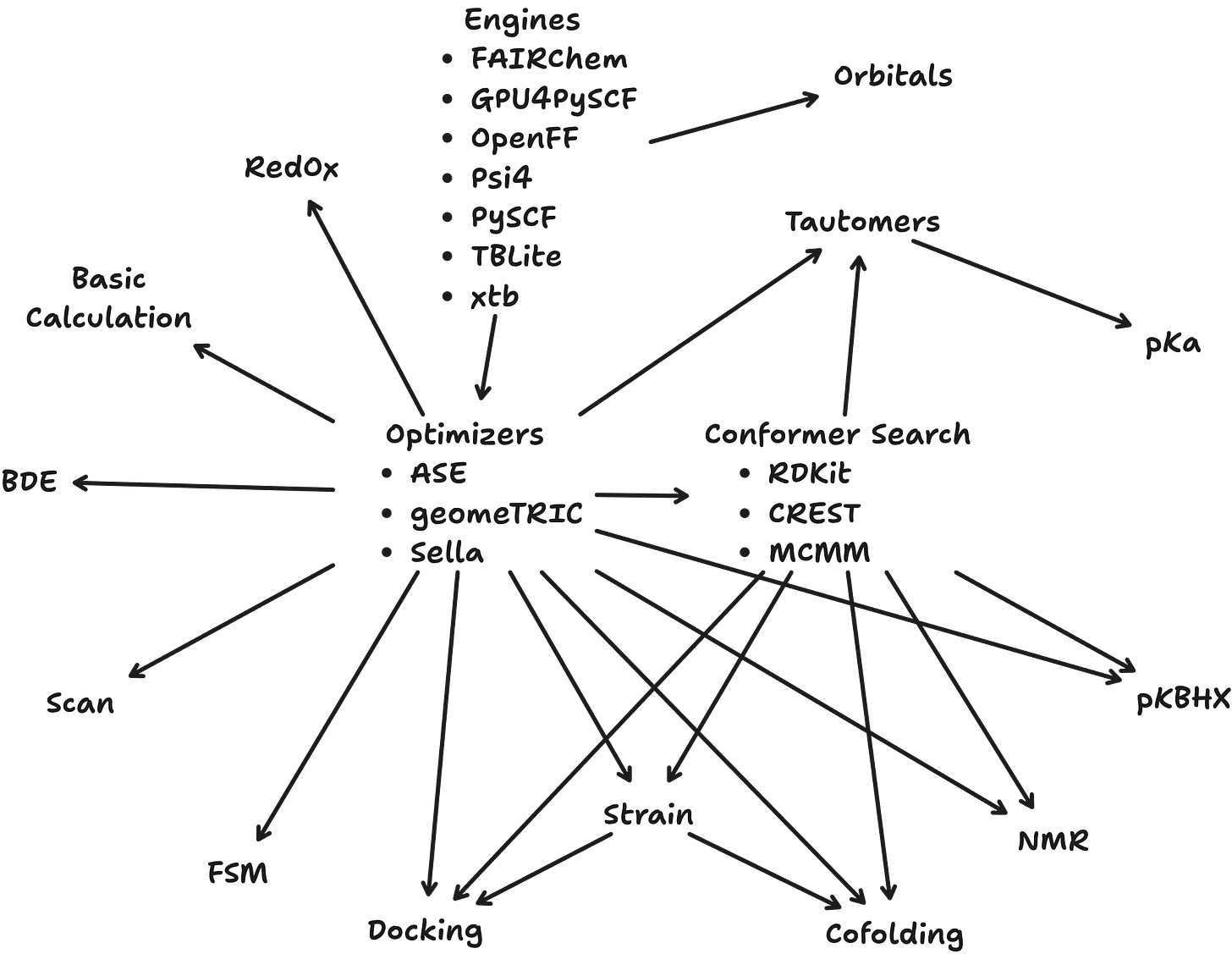

Condor’s internal libraries are where most of the work happens. Each one is dedicated to a single conceptual task and imports from core. There are internal libraries for interfacing with every quantum-chemistry code that we use (e.g. Psi4, xTB, GPU4PySCF), every conformer generator (e.g. RDKit, openconf, CREST), and every optimizer (e.g. geomeTRIC and Sella). Separate packaging simplifies testing and development, while also helping to restrict cross-cutting imports that lead to numerous transitive dependencies. It is now obvious if an import happens between libraries and is significantly easier to limit to situations where it is strictly beneficial (e.g. our PySCF and GPU4PySCF engines are distinct packages to isolate the base PySCF from GPU-related dependencies, but the GPU4PySCF engine reuses much of the PySCF engine code).

Workflows are the interface to the wider world. They translate the logic in our StJames workflows to a form that Condor can understand. They manage the conversion of settings, the setup of saving callbacks, and the management of the tasks queue. Workflow packages in Condor aren’t intended to contain significant scientific logic; instead, they orchestrate the running of libraries and present a consistent API to the outside world. This allows us to refactor internally while maintaining a consistent interface for our users.

Each package has its own set of tests. Tests related to a commit’s changes are run on the package containing the changes and all downstream packages. Since they are independent packages, the tests can all run independently, allowing for massively parallel testing and accelerating developer velocity and bug fixing. On pushes to our master branch we sometimes have 50 independent GitHub Actions workflows running. The vast majority of them complete in a couple of minutes, keeping our bills down, and the strict partitioning of our code helps us worry less about integration problems (though we do have many integration and regression tests).
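One way to pick the packages whose tests need to run is to walk the dependency graph backwards from the changed packages. The sketch below assumes a hand-written graph with hypothetical package names; it is not our actual CI logic.

```python
from collections import deque


def affected_packages(depends_on: dict[str, set[str]], changed: set[str]) -> set[str]:
    """Return the changed packages plus every package downstream of them."""
    # Invert the graph: for each package, record which packages depend on it.
    dependents: dict[str, set[str]] = {name: set() for name in depends_on}
    for package, dependencies in depends_on.items():
        for dependency in dependencies:
            dependents.setdefault(dependency, set()).add(package)

    # Breadth-first search over the inverted graph, starting from the changes.
    affected = set(changed)
    queue = deque(changed)
    while queue:
        current = queue.popleft()
        for dependent in dependents.get(current, ()):
            if dependent not in affected:
                affected.add(dependent)
                queue.append(dependent)
    return affected


# Hypothetical package graph: each key lists the packages it imports from.
DEPENDS_ON: dict[str, set[str]] = {
    "core": set(),
    "pyscf_engine": {"core"},
    "gpu4pyscf_engine": {"core", "pyscf_engine"},
    "xtb_engine": {"core"},
    "optimization_workflow": {"core", "pyscf_engine", "xtb_engine"},
}

if __name__ == "__main__":
    print(sorted(affected_packages(DEPENDS_ON, {"pyscf_engine"})))
```

In this toy graph a change to `xtb_engine` triggers only two test suites, while a change to `core` triggers all of them, which is one reason to keep core small.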

Now that each workflow is independent of the packages needed in other workflows, we can quickly ship updates and new workflows that would previously have caused conflicts. Since all of our code is in a monorepo, we don’t have to worry about conflicting internal versions and can have atomic updates every time we deploy (which is often multiple times per week). Should a change ever need to be rolled back, we can do so nearly instantly, as we have a full snapshot of the exact state of our system thanks to the lockfiles.

How we implemented it

This was a significant undertaking, and one we debated for a long time, but something we ultimately decided was necessary in order to maintain high-quality, performant software. We separated the work into three stages: ideating and investigating, scaffolding and automating, and implementation and testing.

First, we spent a couple of months thinking about the problems with our existing architecture and code and playing around with toy versions of the new architecture to test ideas. We were under no pressure to ship a new backend, and this allowed ideas to percolate and for us to play around with setups without committing to anything. There is a dearth of examples of how to set up large projects for the smaller-than-Google but bigger-than-single-package scale. We aren’t big enough to truly benefit from most build systems like Pants and Bazel, and they come at a heavy cost to developer speed. The initial stage of planning convinced us that modern environment- and package-managers like uv and Pixi along with a judicious use of GitHub Actions can be used effectively in place of a dedicated build system. This initial investigation phase convinced us of the need for a major rewrite and that it would be possible to do so in a straightforward manner.

Second, we threw out all of the previous toy code and started from scratch. We scaffolded a framework, wrote automation scripts, and developed high-quality agent skills. We hand-wrote much of the core code to ensure that future development would be fast, correct, and consistent. We set up numerous scripts for automating package setup, tests, and deployment so that we could maximize consistency (and thus decrease the mental load on developers). We spent large amounts of time writing numerous examples of good docstrings, good code hygiene, and what not to do, so that developers and agents would know how to write code when the time came.

Third, we all pitched in and ported code. In classic software-development style, developers were assigned workflows based on their expertise, and shepherded them through the development, testing, and deployment phases. Coding agents helped with porting the code quickly, but developers were still responsible for making sure the code was correct and in line with Condor’s paradigm. Multiple developers were assigned to review and test each new workflow. In doing so we caught many bugs, some of which were latent in the previous code, and significantly improved the quality of code. We committed to minimizing the scientific changes between the codebases, only doing so where clear improvement could easily be achieved, or where there was an obvious bug. This helped prevent work from spiraling out of control, as there are always ways that code can be improved. Through this process we were confident that we were shipping code that was better than before, and that it will serve as an excellent base for building in the years to come.

Updates

During this process we took the liberty of updating many parts of our codebase.

We’ve updated our developer toolset to accelerate development and improve correctness. We now use ty for type checking and do so at the package level, taking a few seconds at most. We use rumdl for formatting our markdown consistently. We use prek for managing pre-commit hooks, using nested prek.toml files in each package so that our lints and type checking run quickly on every commit (our hooks only take a few seconds thanks to the speed of Ruff, ty, and rumdl). Packages are managed by either uv or Pixi depending on the need for conda-forge packages (uv is preferred but sadly doesn’t yet support conda packages).

We’ve improved our test coverage and made more logical tests. Not everything needs to be tested at the highest level of theory; g-xTB gradients are just as good as DFT gradients when testing our optimizer code, while significantly less costly. The logical separation into packages allows us to more easily mock out parts that are irrelevant to the tests (e.g. the conformer search workflow can have its conformer generation code replaced by a pre-existing ensemble in tests of the clustering and pruning steps). While mocking code is traditionally difficult to write, agentic coding makes this easy.
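As a rough illustration of the mocking described above, the sketch below replaces an expensive generation step with a pre-existing ensemble so that only the pruning logic is exercised. The `ConformerSearch` class, its methods, and the energy-window pruning rule are hypothetical simplifications, not our real workflow.

```python
from unittest import mock


class ConformerSearch:
    """Hypothetical, heavily simplified conformer search workflow."""

    def generate(self, smiles: str) -> list[float]:
        # Stand-in for an expensive call to a conformer generator.
        raise RuntimeError("conformer generation should be mocked in unit tests")

    def prune(self, energies: list[float], window: float) -> list[float]:
        # Keep conformers within `window` of the lowest energy, sorted ascending.
        lowest = min(energies)
        return sorted(e for e in energies if e - lowest <= window)

    def run(self, smiles: str, window: float) -> list[float]:
        return self.prune(self.generate(smiles), window)


def test_pruning_uses_precomputed_ensemble() -> None:
    ensemble = [0.0, 5.0, 1.0]  # pre-existing relative energies
    # Swap the generation step for a mock that returns the stored ensemble.
    with mock.patch.object(ConformerSearch, "generate", return_value=ensemble) as fake:
        kept = ConformerSearch().run("CCCC", window=2.0)
    assert kept == [0.0, 1.0]
    fake.assert_called_once_with("CCCC")


if __name__ == "__main__":
    test_pruning_uses_precomputed_ensemble()
    print("pruning test passed")
```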

Tests are marked with how long they are expected to take (seconds, minutes, hours), allowing us to run a fast subset of tests to quickly check whether anything broke, and then run longer-running tests before merging to master. We’ve even compiled macOS binaries of many executables that were Linux only. These are automatically swapped in at runtime on the machines of our Mac developers so that they can run our test suite locally instead of having to rely on Docker images or our CI/CD pipeline to test.

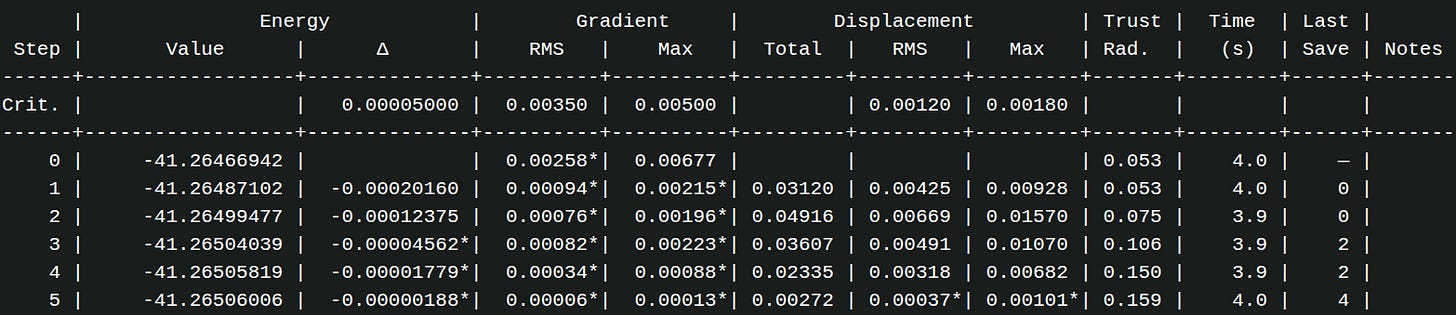

We have rewritten how we perform optimizations. The TRIC coordinate system is now used for much larger non-periodic systems than before (previously we switched to Cartesian for all fast methods). Though each step is a bit slower than Cartesian due to the need to perform coordinate transformations, the quality of each step is much better. This allows for faster overall optimizations due to the optimizations taking fewer, better steps, along with faster loading of results for our users. We now batch database-save callbacks during optimizations to avoid hammering our database with multiple step saves every second and use futures to perform the saves, preventing database communication from slowing down optimizations while still allowing users to follow the progress of their calculations.
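The batching of save callbacks with futures can be sketched as below. This is a simplified illustration under stated assumptions: the `BatchedSaver` class is hypothetical, batches are flushed by size (a real implementation might flush on a timer instead), and `save_batch` stands in for whatever function writes to the database.

```python
from collections.abc import Callable
from concurrent.futures import Future, ThreadPoolExecutor


class BatchedSaver:
    """Collect optimization steps and save them in batches on a background thread."""

    def __init__(self, save_batch: Callable[[list[int]], None], batch_size: int = 10) -> None:
        self._save_batch = save_batch
        self._batch_size = batch_size
        self._pending: list[int] = []
        self._futures: list[Future] = []
        # A single worker keeps batches in submission order.
        self._executor = ThreadPoolExecutor(max_workers=1)

    def on_step(self, step: int) -> None:
        # Called by the optimizer after every step; returns without waiting on I/O.
        self._pending.append(step)
        if len(self._pending) >= self._batch_size:
            self.flush()

    def flush(self) -> None:
        if not self._pending:
            return
        batch, self._pending = self._pending, []
        self._futures.append(self._executor.submit(self._save_batch, batch))

    def close(self) -> None:
        # Save any leftover steps, then wait for all saves and surface their errors.
        self.flush()
        for future in self._futures:
            future.result()
        self._executor.shutdown()


if __name__ == "__main__":
    saved: list[list[int]] = []
    saver = BatchedSaver(saved.append, batch_size=10)
    for step in range(25):
        saver.on_step(step)
    saver.close()
    print([len(batch) for batch in saved])
```

The optimizer only ever appends to a list and occasionally submits a future, so a slow database write delays the next save rather than the next optimization step.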

We’ve updated GPU4PySCF to the latest version, providing significant improvements. The new JoltQC library in GPU4PySCF provides JIT-compiled kernels, which would normally take several minutes to compile for every new calculation. We now warm up the kernels at image-build time, providing faster starts for our users’ jobs instead of the multi-minute JIT compile times needed previously. If the Modal GPU image is cold, startup should now be <30 seconds, and <1 second if the image is warm.

We’ve improved our logfiles, providing cleaner, more relevant information. Logfiles are a great way to gain a greater understanding of what is happening during a calculation and ensure that things are running as expected. Not all information is saved to our backend in a structured manner, but we think it is important to give users access to data that can be useful for debugging calculations. Our updated logfiles now display more data in an organized manner. We better suppress the spurious output of many of our dependencies, and surface more relevant information for the user. We’ve even added resource tracking to the logfiles for some workflows, allowing for a better understanding of what resources were needed to run a given calculation and how close you are to the resource limits on a machine.

We are improving our security and portability by integrating more tightly with AWS. We are switching our application-layer hosting from Digital Ocean to AWS, keeping communication between our application layer, scientific workflow layer, and database all within our AWS VPC. This will make it much easier to deploy our platform on our customers’ virtual private cloud instances, decreasing the engineering lift required to do so.

We’ve added better recovery from errors. Random segfaults sometimes occur in programs like CREST for no apparent reason, and the executable is now automatically restarted when a segfault is detected. For issues like out-of-memory errors that can happen in large DFT calculations, we now handle them more gracefully, properly shutting down the image with a useful error message. (If you need to run very large DFT calculations on our infrastructure, please contact us for some tips and possibilities.)
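A restart-on-segfault wrapper could look something like the sketch below. It assumes a POSIX system, where `subprocess` reports a process killed by signal N with a return code of -N; the function name `run_with_segfault_retry` and the retry limit are hypothetical.

```python
import signal
import subprocess
import sys


def run_with_segfault_retry(
    command: list[str], max_attempts: int = 3
) -> tuple[subprocess.CompletedProcess, int]:
    """Run a command, rerunning it if it dies from SIGSEGV.

    Returns the final result and the number of attempts made.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        result = subprocess.run(command, capture_output=True, text=True, check=False)
        # On POSIX, a negative return code means the process was killed by a signal.
        if result.returncode != -signal.SIGSEGV:
            break
    return result, attempt


if __name__ == "__main__":
    result, attempts = run_with_segfault_retry([sys.executable, "-c", "print('ok')"])
    print(result.stdout.strip(), attempts)
```

Only segfaults trigger a retry here; any other non-zero exit code is returned to the caller unchanged so that genuine errors are not hidden.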

Lastly, we’ve updated our units to the latest CODATA 2022 SI values. This should have minimal impact on calculations, with the biggest change being in the eV to Hartree conversion value. If you are comparing absolute values of calculations for AIMNet2, OMol25’s eSEN conserving small, or UMA models, you can multiply old energy values by 1.00000050545 to match the new values (this is important if you calculated your reactants and transition states at separate times).
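The rescaling is a single multiplication, sketched below with the factor quoted above. The helper name `rescale_energy` is hypothetical, and the example energy is made up purely to show the size of the offset.

```python
# Factor quoted above for converting old absolute energies to the new unit values.
CODATA_RESCALE = 1.00000050545


def rescale_energy(old_energy: float) -> float:
    """Convert an absolute energy computed before the units update."""
    return old_energy * CODATA_RESCALE


if __name__ == "__main__":
    old_reactant = -1000.0  # made-up absolute energy from before the update
    new_reactant = rescale_energy(old_reactant)
    # The offset grows with the magnitude of the absolute energy.
    print(f"offset: {new_reactant - old_reactant:.8f}")
```

The offset scales with the magnitude of the absolute energy (about 5e-4 per 1000 energy units), so a barrier computed from an old reactant energy and a new transition-state energy would carry a spurious shift unless the old value is rescaled first.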

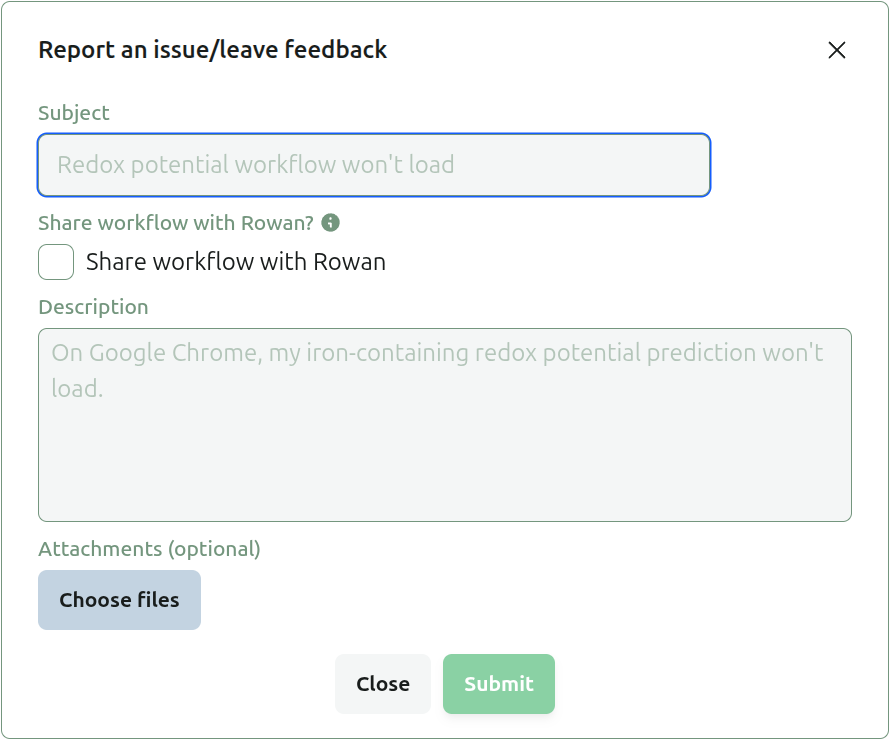

As we transition our workflow backend, you may experience some bugs (though we hope to keep these to a minimum). If you notice anything is wrong, doesn’t work as expected, or could be improved, please let us know. You can report bugs by clicking on the bug icon in the top right corner of the screen. This will pop up a box where you can enter the issue you are seeing, and optionally share the calculation privately with us (we are unable to view your calculations unless you explicitly share them with us due to security considerations).

To write a good bug report, summarize the problem in a single sentence in the subject line. Then, in the description section, explain in more detail what you are attempting to do, the result you are seeing, and the result you expect to see. For example:

Subject: Scan points not loading during run

I submitted a scan over the C–C–C–C dihedral angle in butane, but no points are displaying until the entire scan has completed. I expect to see individual scan points populate as they complete.

Well-written bug reports help us to quickly diagnose and fix problems (occasionally even the same day), and are very helpful as we continue to grow our platform.

We plan to add many new features and updates, and are looking forward to a summer of building new features and workflows. We will be joined by four interns this summer to work on everything from periodic DFT, to educational materials, to MD, to conformer search. If there are workflows or features that you would find useful, please let us know.