TeraChem Now Available On Rowan

GPU fundamentals; adapting quantum chemistry to GPUs; how to run TeraChem

We’re excited to share that TeraChem is now available on Rowan! Developed by Todd Martínez and co-workers at Stanford, TeraChem uses graphics processing units (GPUs) to accelerate quantum chemical calculations. Although lots of applications today rely on GPU acceleration, including molecular dynamics and machine learning, TeraChem was one of the first applications of GPUs to scientific computing: early TeraChem work even predated the development of NVIDIA’s CUDA platform for GPU programming.

Why is this so powerful? As the name implies, GPUs were originally developed for 3D graphics: determining the color of each output pixel requires a set of somewhat complex computations, and the computer must run (# pixels / frame) * (# frames / second) of those calculations each second. Conventional CPUs aren’t great at this sort of parallel computation. Each core can essentially only run a single instruction per clock cycle, which means that running millions of identical computations on different data takes millions of times longer.1
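To put a rough number on that formula, here is a minimal sketch. The resolution and frame rate are illustrative assumptions (a 1920×1080 display at 60 frames per second), not figures from any particular GPU or application.

```python
# Illustrative numbers only: a 1920x1080 display refreshed 60 times per second.
WIDTH, HEIGHT = 1920, 1080
FRAMES_PER_SECOND = 60


def pixel_updates_per_second(width, height, fps):
    """Return (# pixels / frame) * (# frames / second)."""
    pixels_per_frame = width * height
    return pixels_per_frame * fps


updates = pixel_updates_per_second(WIDTH, HEIGHT, FRAMES_PER_SECOND)
print(f"{updates:,} pixel computations per second")  # 124,416,000
```

Even at this modest resolution, that is over a hundred million per-pixel computations every second, each of which may itself involve many arithmetic operations.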

GPUs, in contrast, follow the “single instruction/multiple data” paradigm, where a single operation is applied to an entire array of input data at once. This makes them crazy fast for certain sorts of operations, but also makes algorithm design and implementation much more complex. (For a deep dive, see this article on implementing efficient matrix multiplication in CUDA.) In particular, the GPU needs to be able to run identical computational steps for every piece of input data, or costly “warp divergence” can occur.
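The cost of divergence can be illustrated with a toy model. This is a deliberately simplified sketch, not a description of how any real GPU schedules work: it assumes a warp of 32 threads (the warp size on NVIDIA GPUs) and that whenever threads in a warp take different branches, the warp executes each distinct branch one after another. The branch names and instruction counts are hypothetical.

```python
WARP_SIZE = 32  # threads that execute in lockstep on NVIDIA GPUs


def warp_cost(branches, branch_cost):
    """Toy cost model: the warp runs every distinct branch taken by any of
    its threads, serially, so the cost is the sum over distinct branches."""
    return sum(branch_cost[b] for b in set(branches))


# Hypothetical instruction counts for two sides of an if/else.
branch_cost = {"a": 10, "b": 12}

# Every thread takes the same branch: no divergence.
uniform = ["a"] * WARP_SIZE

# Even-numbered threads take "a", odd-numbered threads take "b".
divergent = ["a" if i % 2 == 0 else "b" for i in range(WARP_SIZE)]

print(warp_cost(uniform, branch_cost))    # 10
print(warp_cost(divergent, branch_cost))  # 22
```

In this model the divergent warp pays for both branches even though each thread only needs one of them, which is why GPU-friendly algorithms try to arrange the data so that neighboring threads follow the same path.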

While “running quantum chemistry on GPUs” might sound obvious in hindsight, figuring out how to adapt the requisite algorithms to GPU architecture is no mean feat, and indeed many papers have come out of this work (1, 2, 3, 4, 5, 6).2 Here’s what Todd Martínez had to say in a 2014 NVIDIA interview:

We reformulated all the algorithms of quantum chemistry to map well onto GPU architectures. This implies a fair bit of precomputing to arrange the data so that the GPU can process it effectively. Because of this, TeraChem takes advantage of spatial locality more efficiently than other quantum chemistry packages.

Practically, what this all means is that TeraChem is substantially faster than CPU-based quantum chemistry packages. Even with a single relatively old GPU, TeraChem can compute a B3LYP-D3/6-31G(d)/CPCM(water) optimization step for vancomycin (176 atoms, 760 electrons) in just a couple minutes! (Here’s the link to the optimization, which I ran yesterday.)

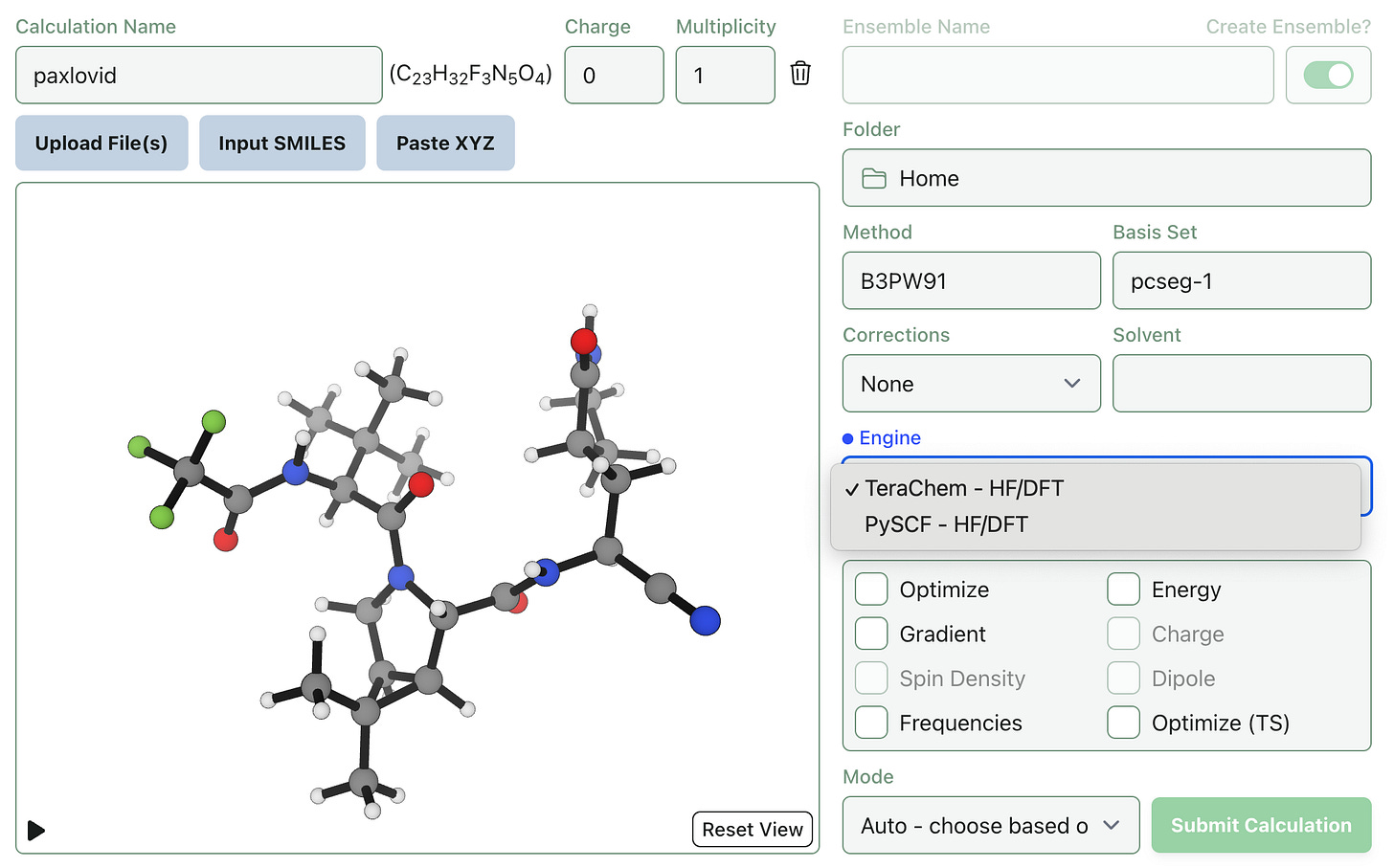

We’re excited to partner with the Martínez Group to bring TeraChem to Rowan.3 You can start running TeraChem calculations through the same interface as before: just choose between “PySCF” and “TeraChem” when submitting a Hartree–Fock/DFT calculation.

Happy computing!

If you’re reading this and thinking “what about AVX-512 or SSE2 or cache locality,” then this high-level overview is probably not for you.

At a high level, there are a few big problems. Efficient calculation of electron-repulsion integrals (ERIs) typically requires “recursion relations” that involve conditional logic, making straightforward implementations poorly suited to GPUs, and the contraction of these integrals is also pretty complex. Also, most GPUs are much faster at single-precision arithmetic (float/FP32) than double-precision arithmetic (double/FP64), but ERIs typically need to be accurate to within 10⁻¹⁰ or so. The solutions to these problems, described in the papers cited above, are quite clever.
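The precision problem is easy to see with a few lines of Python. This sketch is only an illustration of floating-point formats, not of how TeraChem handles precision: it rounds a value to FP32 with the standard-library `struct` module and loosely compares each format's machine epsilon against the ~10⁻¹⁰ target, treated here as a relative precision (an assumption made for simplicity).

```python
import struct
import sys


def to_float32(x):
    """Round a Python float (FP64) to the nearest FP32 value."""
    return struct.unpack("f", struct.pack("f", x))[0]


TARGET = 1e-10  # rough precision target mentioned above

# Error introduced just by storing 0.1 in single precision.
value = 0.1
fp32_error = abs(to_float32(value) - value)

# Machine epsilon: spacing between 1.0 and the next representable number.
FP32_EPSILON = 2.0 ** -23
FP64_EPSILON = sys.float_info.epsilon  # 2**-52

print(f"FP32 rounding error for 0.1: {fp32_error:.2e}")
print(f"FP32 epsilon: {FP32_EPSILON:.2e}, FP64 epsilon: {FP64_EPSILON:.2e}")
print("FP32 epsilon below target:", FP32_EPSILON < TARGET)
print("FP64 epsilon below target:", FP64_EPSILON < TARGET)
```

Storing a single number in FP32 already introduces an error larger than the target, before any arithmetic has been done, so single precision alone cannot be used naively for every integral.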

A nice illustration of the scope of the challenge comes from a 2010 C&EN news article:

In contrast to classical molecular dynamics research, the field of quantum chemistry is not quite far enough along in its incorporation of GPUs to publish application-based papers. "The quantum chemists have it hard" when it comes to GPUs, [John E.] Stone says. "Their algorithms are very involved, and there are a lot of different ways of doing things." So it's not surprising that many researchers in the community were originally quite reticent to invest time and energy in rewriting their algorithms to run on GPUs.